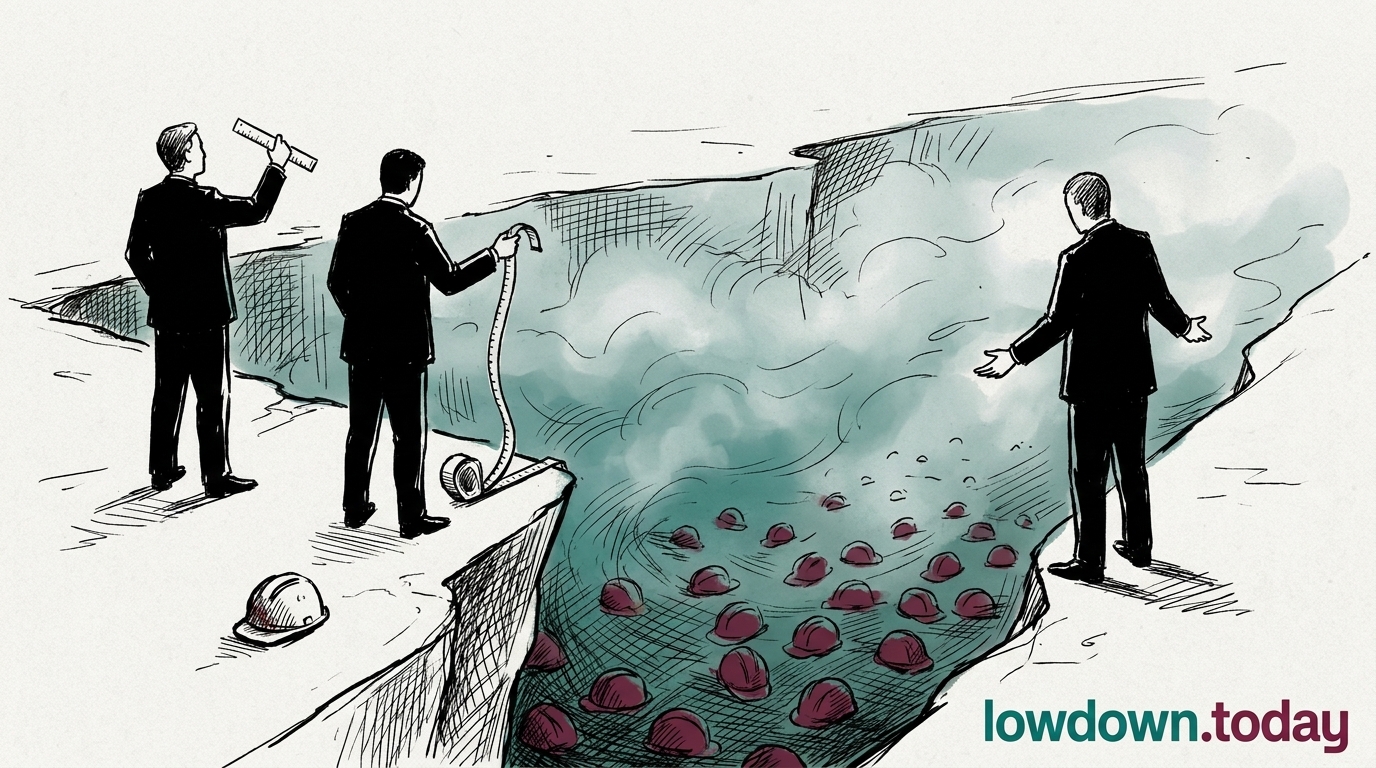

The Federal Reserve Board published a survey reconciliation paper on 3 April 2026 showing that three separate federal instruments produced AI adoption rates of 18%, 41%, and 78% for the same late-2025 period. The 4.3x gap between the lowest and highest figures reflects whether adoption is measured by firm (BTOS), individual self-report (RPS), or employment weight (SBU). Daily AI use sits at 12% of the workforce; weekly use at 35.2%.
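The arithmetic behind the 4.3x figure, and one way the unit of measurement can move the headline rate, can be sketched as follows. The three rates are taken from the text; the `toy_firms` list is a purely hypothetical economy invented for illustration and is not survey data. It assumes one large adopting firm among several small non-adopters, which may or may not resemble the pattern in the actual surveys.

```python
# Adoption rates (percent) quoted in the text for the same late-2025 period.
rates = {
    "BTOS (firm)": 18.0,
    "RPS (individual self-report)": 41.0,
    "SBU (employment weight)": 78.0,
}

lowest = min(rates.values())
highest = max(rates.values())
spread_ratio = highest / lowest      # 78 / 18, the "4.3x" in the text
spread_points = highest - lowest     # gap in percentage points

# HYPOTHETICAL toy economy, not survey data: (employees, uses_ai).
# Assumption: one large adopter and four small non-adopters.
toy_firms = [(5000, True), (20, False), (15, False), (10, False), (5, False)]

# Firm-count rate: every firm counts once, regardless of size.
firm_share = sum(1 for _, uses_ai in toy_firms if uses_ai) / len(toy_firms)

# Employment-weighted rate: every firm counts in proportion to its headcount.
total_employees = sum(n for n, _ in toy_firms)
employment_share = sum(n for n, uses_ai in toy_firms if uses_ai) / total_employees

print(f"Highest/lowest ratio: {spread_ratio:.1f}x")
print(f"Gap: {spread_points:.0f} percentage points")
print(f"Toy firm-count rate: {firm_share:.0%}")
print(f"Toy employment-weighted rate: {employment_share:.0%}")
```

In the toy case the same five firms yield 20% adoption by firm count and about 99% by employment weight, which shows how a single population can support very different headline rates. It does not explain the individual self-report figure, which rests on a different respondent base altogether.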

This is the first federal acknowledgement that no canonical measure of AI adoption exists. Without an agreed baseline, Congress has no single figure to legislate against, and the Hawley-Warner measurement coalition retains political legitimacy as the only realistic path to better data.