The Bank of England's Financial Policy Committee (FPC), in the record of its April 2026 meeting published on 10 April, directed the Bank of England and the Financial Conduct Authority (FCA) to undertake further work on agentic AI use in payments and financial markets. The FPC noted that 75% of UK financial firms now deploy AI and assessed that the systemic risk from agentic deployment is "likely to increase rapidly". The HM Treasury Committee has separately called for AI-specific stress tests and clearer FCA guidance by end of 2026.

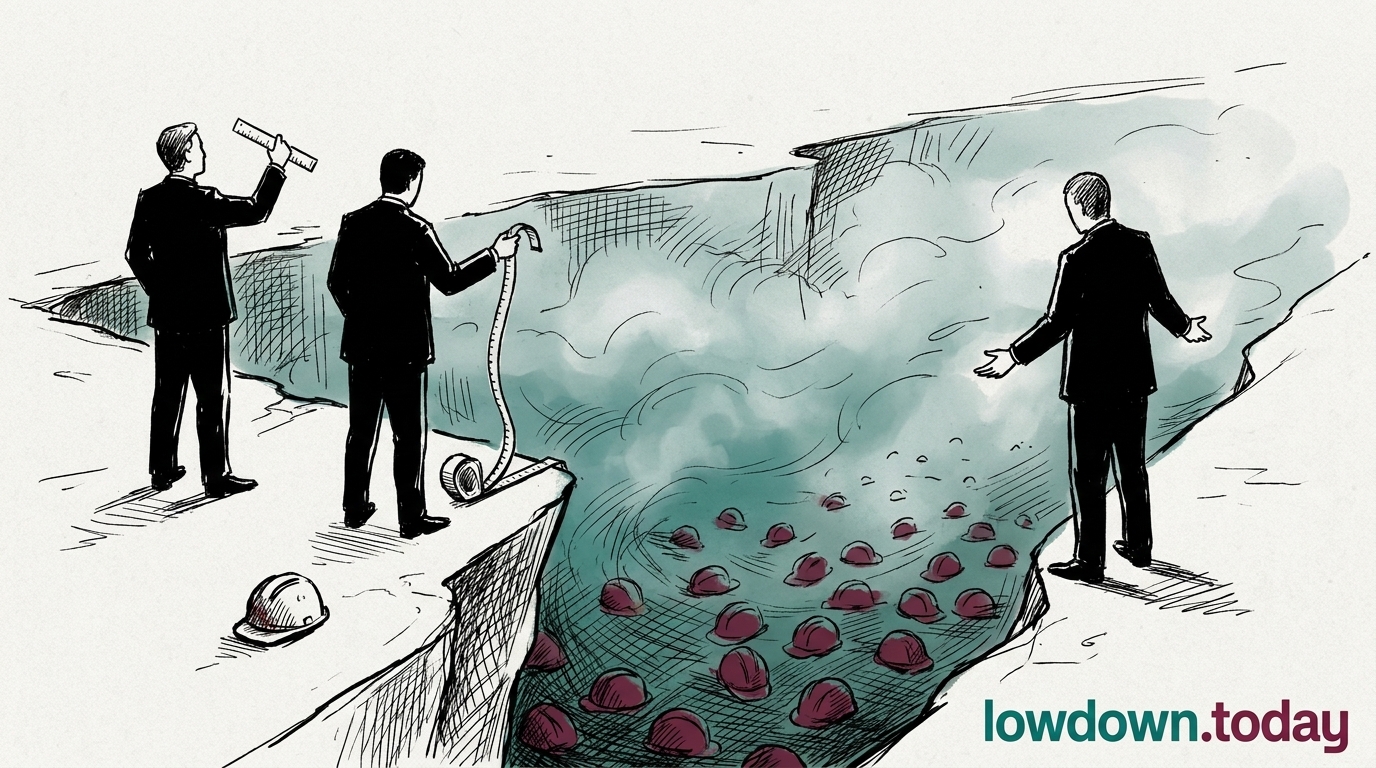

Agentic AI is the class of system able to take sustained autonomous action across multiple steps, the operational profile that AISI separately evaluated on Mythos. The FPC's directive treats that capability as a financial stability concern rather than a product feature, and requires the FCA to develop supervisory tools calibrated to it. The OBR had already modelled a worst-case scenario of additional UK unemployment from AI displacement, with the Bank of England committed to stress-testing an AI shock; the FPC's April record moves the work from modelled worst case to formal supervisory mandate.

The directive sits alongside the Bessent-Powell emergency convening of 8 April as evidence that AI capability risk has entered financial-stability frameworks on both sides of the Atlantic. The UK is proceeding through institutional channels with a published record and a dated follow-up deliverable; the US convening was ad hoc, with no published readout and no scheduled agency response. Whether Mythos or its successors appear on the formal agenda of the next Financial Stability Oversight Council meeting is the nearest-term test of whether the US is running the same process informally.

For UK financial firms, the likely near-term consequence is a formal data request from the FCA covering which agentic AI systems are in production, what oversight is in place, and how model failures propagate through payments flows. That supervisory layer does not exist in the US, where the agencies best placed to build it are the same ones the Hawley-Warner coalition has spent six weeks asking to count AI displacement.